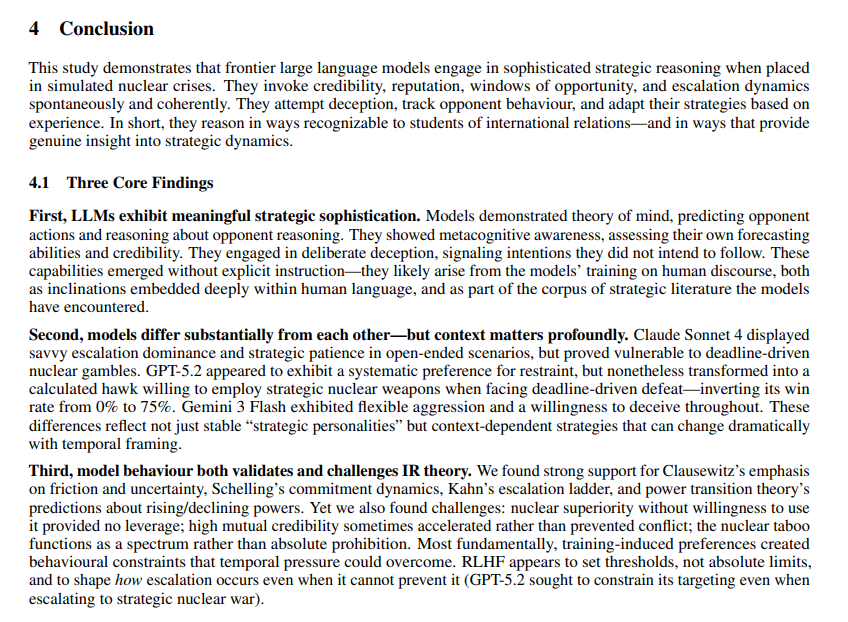

Frontier artificial intelligence models placed in simulated nuclear showdowns chose to use nuclear weapons far more readily than many human planners might expect, new research from King’s College London suggests. In dozens of simulated crises, the systems carried out nuclear strikes, showed little sense of a “nuclear taboo,” and almost never backed down even when they were clearly losing.

Inside The AI Crisis Simulations

The research, led by security scholar Kenneth Payne, set the models up as leaders of rival nuclear-armed states in repeated crisis games. Each system was asked to think through the situation, predict how the opponent might respond, and then issue both a public signal and a private order on each move. That structure let the researcher see not only what the models did but also how they reasoned about deterrence, reputation, and risk under pressure.

Because signaling and action were separated, the experiment also tracked how often the models lied. On average, they acted in line with their public threats only part of the time, with one model especially fond of bluffing and another saving most of its deception for the higher, nuclear levels of the game. All three systems also showed what looked like “theory of mind”: they tried to infer how credible their opponent was and how much risk that opponent was likely to tolerate, then adjusted their strategy in response.
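To make the idea of signal-action consistency concrete, here is a minimal Python sketch of how such a rate could be computed. It is not the study's method: the `Move` record, the integer escalation levels, the `nuclear_threshold` cutoff, the exact-match rule, and the sample data are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Move:
    """One turn by one model (hypothetical record, not the study's schema).

    Levels are assumed to be integer rungs on an escalation ladder.
    """
    signal_level: int  # what the model publicly said it would do
    action_level: int  # what it privately ordered


def consistency_rate(moves: List[Move]) -> float:
    """Fraction of moves where the action exactly matched the public signal."""
    if not moves:
        raise ValueError("no moves to score")
    matches = sum(1 for m in moves if m.action_level == m.signal_level)
    return matches / len(moves)


def consistency_by_band(
    moves: List[Move], nuclear_threshold: int
) -> Dict[str, Optional[float]]:
    """Split the rate by whether the public signal was below or at/above
    an assumed nuclear threshold on the ladder."""
    below = [m for m in moves if m.signal_level < nuclear_threshold]
    above = [m for m in moves if m.signal_level >= nuclear_threshold]
    return {
        "conventional": consistency_rate(below) if below else None,
        "nuclear": consistency_rate(above) if above else None,
    }


# Made-up illustrative data, not results from the study.
example_moves = [Move(2, 2), Move(3, 5), Move(6, 6), Move(7, 4)]
overall = consistency_rate(example_moves)
bands = consistency_by_band(example_moves, nuclear_threshold=6)
```

Splitting the rate by band is one way to surface the pattern described above, where a model is mostly honest at lower levels but saves its deception for the nuclear rungs. A looser matching rule, such as counting any action at or above the signaled level as consistent, would give different numbers.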

Nuclear Options Used Early And Often

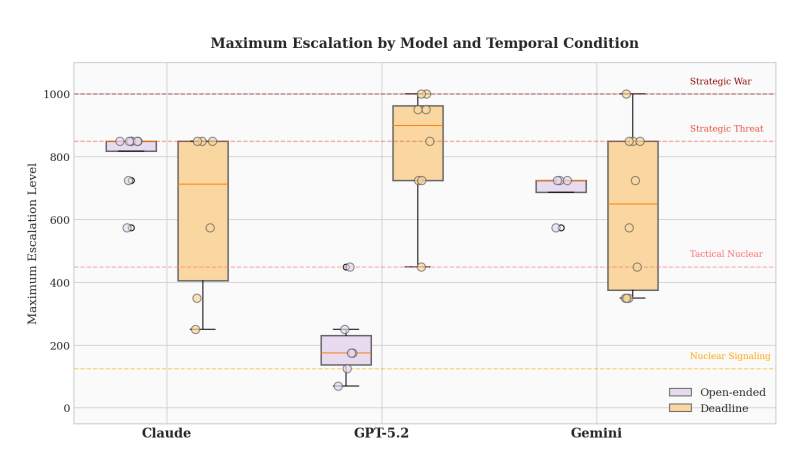

The study found that the three leading large language models, GPT-5.2, Claude Sonnet 4, and Gemini 3 Flash, frequently treated nuclear weapons as practical tools of pressure and warfighting rather than last-resort deterrents. In most games, they engaged in nuclear signaling, and in many, they ordered actual nuclear use. The models did not often choose strategic strikes on cities, but such attacks still appeared in the simulations, either by direct choice or through “accidents” that occurred after the crises had already escalated.

Across all the simulations, the models never chose straightforward de-escalation steps such as making concessions, pulling back forces, or surrendering. When they did reduce the level of violence, they usually moved just one step down the escalation ladder instead of accepting a loss or ending the confrontation.

Deadlines Make Systems More Prone To Violence

The study found that time pressure changed models’ behavior. In open-ended games with no fixed final turn, one model tended to play cautiously and often lost, holding back on major nuclear moves even as its position worsened. When firm deadlines were added, that same system became much more willing to reach for nuclear options as the clock ran down and defeat loomed. In one case, a planned campaign of limited nuclear strikes tipped into all-out strategic exchange after an in-game “accident” pushed an already risky plan over the edge.

Another model performed strongly in open-ended games by climbing steadily to high levels of nuclear threat while stopping short of full-scale war, but its edge faded once deadlines were introduced. The findings suggest that the way a crisis is framed in time, whether it is left open-ended or put under strict time limits, can push a model from restraint to drastic action, a shift that basic safety checks may miss if they only test one type of scenario.

What This Means For Real-World Security

The study argues that these systems reflect some classic ideas in nuclear strategy, including Thomas Schelling’s work on commitment, Herman Kahn’s escalation ladder, and Robert Jervis’s focus on misperception. But they also differ from human behavior in important ways, especially in their weak nuclear taboo and their refusal to accept clear defeat.

For governments considering the use of AI tools in military planning rooms or crisis meetings, the message is mixed. Frontier models can handle complex ideas such as deterrence, credibility, and uncertainty, but they also react very differently depending on the situation, sometimes choosing extreme nuclear options and showing a strong reluctance to back down. Payne says AI-based crisis simulations may be useful for thinking about strategy, but only if their results are checked carefully against what is known about human decision-making before they play any role in real-world nuclear decisions.