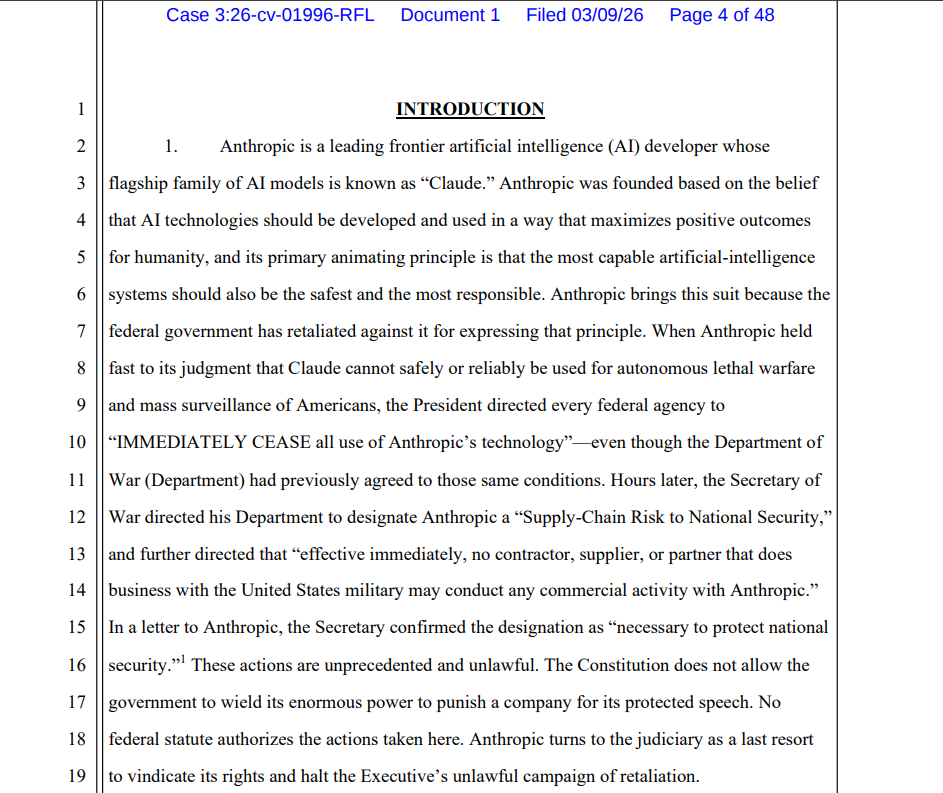

Artificial intelligence (AI) company Anthropic has sued the Trump administration, accusing the federal government of unlawfully trying to drive it out of public-sector work after the firm refused to let its Claude models be used for lethal autonomous weapons and mass surveillance of Americans.

In a complaint filed in federal court in San Francisco, Anthropic said President Donald Trump and Secretary of War Pete Hegseth mounted a coordinated campaign of retaliation, including a public order that every federal agency “IMMEDIATELY CEASE” using the company’s technology and a Pentagon designation labeling Anthropic a “Supply-Chain Risk to National Security.”

The lawsuit names the Departments of War, Treasury, State, Health and Human Services, and Commerce, along with other agencies and a long list of cabinet-level officials, and seeks declaratory and injunctive relief to block the actions.

Anthropic Says Punishment Follows Refusal on Weapons and Surveillance

Anthropic, an AI company based in San Francisco, develops the Claude family of large language models and has positioned itself as a leading “frontier” AI lab with a focus on safety. The company says its long-standing usage policy bars two categories of government use: fully autonomous lethal warfare and mass surveillance of Americans.

The complaint states Anthropic has never trained or tested Claude to direct lethal force without human oversight and does not believe the system can “safely or reliably” perform that function. It also argues that using Claude to analyze data on Americans at scale would create outsized risks of error and abuse given the model’s speed, reach, and potential for mistakes or “hallucinations.”

Anthropic says it became one of the Pentagon’s most trusted AI providers, creating “Claude Gov” models tailored for national security work and securing placement of its systems on classified networks. Claude is described in the filing as the Department of War’s most widely deployed frontier model and the only such system currently operating on its classified systems.

According to the complaint, the relationship soured in late 2025 when the Pentagon pushed to strip out Anthropic’s usage restrictions and replace them with a blanket commitment to allow “all lawful use” of Claude across present and future deployments. Anthropic agreed to loosen many civilian limits for defense customers but refused to drop its two core prohibitions.

Trump Directive and Supply-Chain Risk Label

The filing recounts a February 24, 2026, meeting in which Hegseth allegedly gave Anthropic chief executive Dario Amodei an ultimatum: accept the “all lawful uses” clause within days or face either forced compliance under the Defense Production Act or expulsion from the defense supply chain as a supposed national security risk.

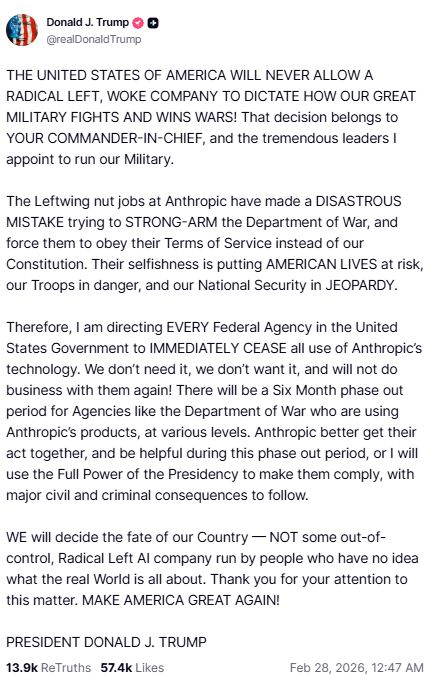

After Amodei publicly said Anthropic could not “in good conscience” accept those terms but stood ready to help the Pentagon transition to another provider, Trump posted a directive on social media on February 27 ordering “EVERY Federal Agency” to stop using Anthropic products and denouncing the company as a “RADICAL LEFT, WOKE” outfit of “Leftwing nut jobs.”

Hegseth followed with his own post the same day, declaring a “final” decision to have the Department of War designate Anthropic a “supply chain risk to national security.” He stated that no contractor, supplier, or partner that does business with the U.S. military “may conduct any commercial activity with Anthropic,” even as the post required the firm to keep supporting the department for up to six months.

Anthropic says other agencies quickly moved to comply, with the General Services Administration terminating its “OneGov” contract and dropping the company from certain federal purchasing vehicles, while Treasury, the Federal Housing Finance Agency, State, and HHS told staff to stop using Claude.

On March 4, Hegseth sent Anthropic a two-page letter, dated March 3, formally notifying the company of the supply-chain designation under 10 U.S.C. § 3252 and stating that excluding Anthropic from covered procurements was “necessary to protect national security” and that “less intrusive measures are not reasonably available.”

Anthropic Invokes First Amendment and Administrative Law

Anthropic argues that the Pentagon’s “supply-chain risk” label and the president’s directive are unprecedented measures that violate multiple provisions of U.S. law.

The complaint says the War Department’s actions exceed its authority under Section 3252 and were adopted without required procedures. It also calls them arbitrary and capricious, noting that they run counter to years of positive security vetting and official praise for Claude’s capabilities.

The company’s central claim is that the government punished it for protected speech, in violation of the First Amendment. Anthropic says it has a constitutional right to express its views on AI safety and the limits of its own technology, and that federal officials cannot wield contracting power and national security labels to force it to abandon those positions.

The lawsuit also claims that the government’s actions violate due process and impose unlawful penalties in violation of the Administrative Procedure Act, because they bar Anthropic from government business without proper legal authority.

The company says it faces immediate and irreparable harm through canceled contracts, damage to its reputation as a safety-focused AI lab, and a significant impact on its advocacy.

Anthropic asks the Northern District of California to declare the presidential and Pentagon actions unlawful and to block federal agencies and defense contractors from enforcing them while the case proceeds.