The U.S. military relied on artificial intelligence tools from Anthropic during its latest airstrikes on Iran, only hours after President Donald Trump ordered federal agencies to stop using the company’s technology, according to the Wall Street Journal.

Banned AI Tool Still at the Center of Iran Campaign

The report said Anthropic’s Claude system is used to help analysts sort through intelligence, pick out possible targets and run battle simulations, showing how deeply it is built into U.S. military planning even as the administration moves to sever ties with its developer.

According to people familiar with the matter, Claude is used by military commands worldwide, among them U.S. Central Command, which oversees Middle East operations. Centcom would not say which systems are supporting the current campaign against Iran.

Months of Friction Over How AI Can Be Used

The airstrikes come after months of friction between Anthropic and the Pentagon over safeguards on Claude. The company has resisted Defense Department pressure to allow “any lawful use” of its software, including potential roles in mass domestic surveillance and fully autonomous weapons.

On Friday, Trump ordered federal agencies to halt cooperation with Anthropic and begin removing its products, while the Defense Department labeled the company a security risk and a vulnerability to its supply chain, a designation that restricts how contractors can work with the firm. Administration officials were also angered by Anthropic’s lobbying on AI policy and its links to groups associated with Democratic donors.

Previous Role in Maduro Capture Complicates Phase-Out

Claude’s role in earlier high-profile missions, including the U.S. operation to capture Venezuelan President Nicolás Maduro, helps explain why the administration set a six-month phase-out rather than ordering an immediate shutdown. Analysts say removing Claude from existing systems will be technically complex and could still take months even on an accelerated schedule.

Pentagon Turns to OpenAI and xAI

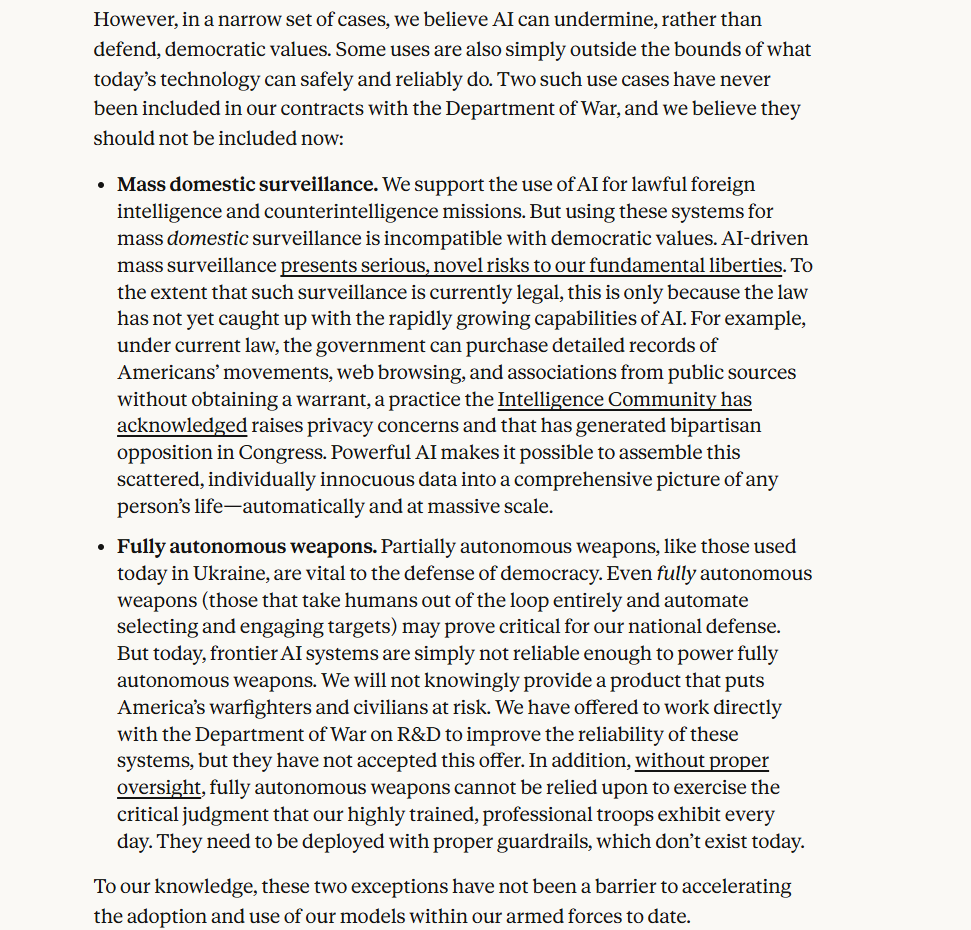

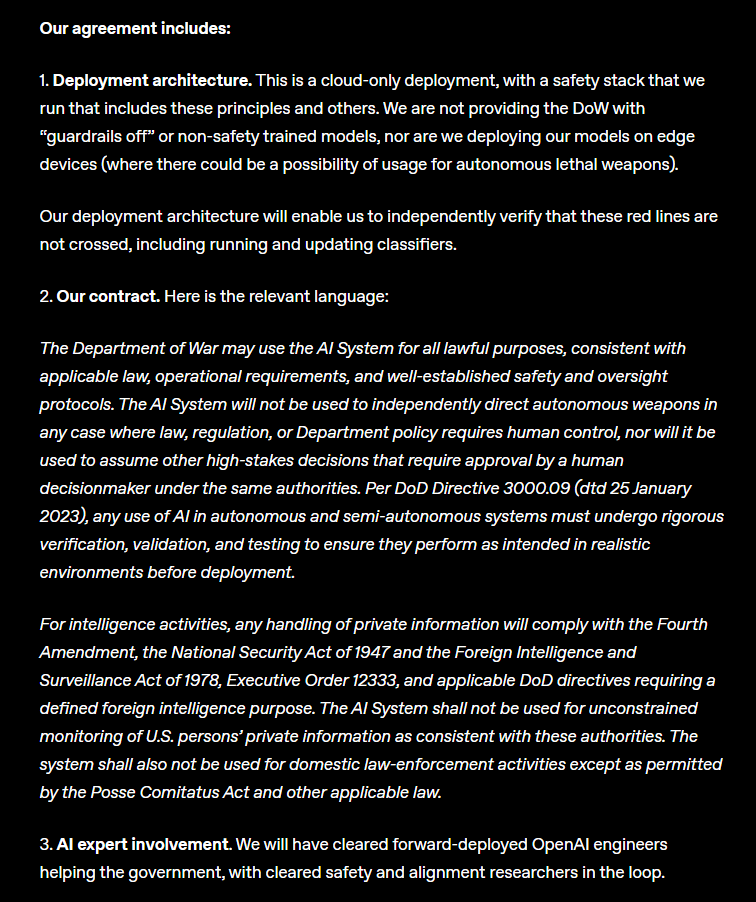

As relations with Anthropic broke down, the Pentagon turned to other AI suppliers. It signed an agreement with OpenAI to deploy the company’s models on the U.S. Department of War’s classified cloud networks, with OpenAI stressing three “red lines”: no use for domestic mass surveillance, no role in directing autonomous weapons, and no replacement of humans in other high-stakes decisions.

OpenAI says it will retain control over safety systems, keep deployment cloud-based with its own cleared staff in the loop, and reserve the right to terminate the deal if those limits are breached.

At the same time, the Pentagon has struck a separate arrangement with Elon Musk’s xAI to bring its Grok model into classified military systems used for sensitive intelligence analysis, weapons development and battlefield operations. xAI has accepted the department’s “all lawful purposes” standard that Anthropic rejected, giving the military broad flexibility over how Grok can be used and prompting concern from some officials and outside experts about safety and oversight.