Anthropic is facing a dual crisis centered on its advanced Mythos AI model: a potential security breach, and an ongoing legal fight with the Pentagon over control of the AI for military purposes. The company is investigating whether an unauthorized group accessed Mythos through a third-party vendor’s environment; however, it has stated that the investigation has uncovered nothing so far and that there is no evidence malicious actors have obtained the model.

The Mythos AI Dilemma

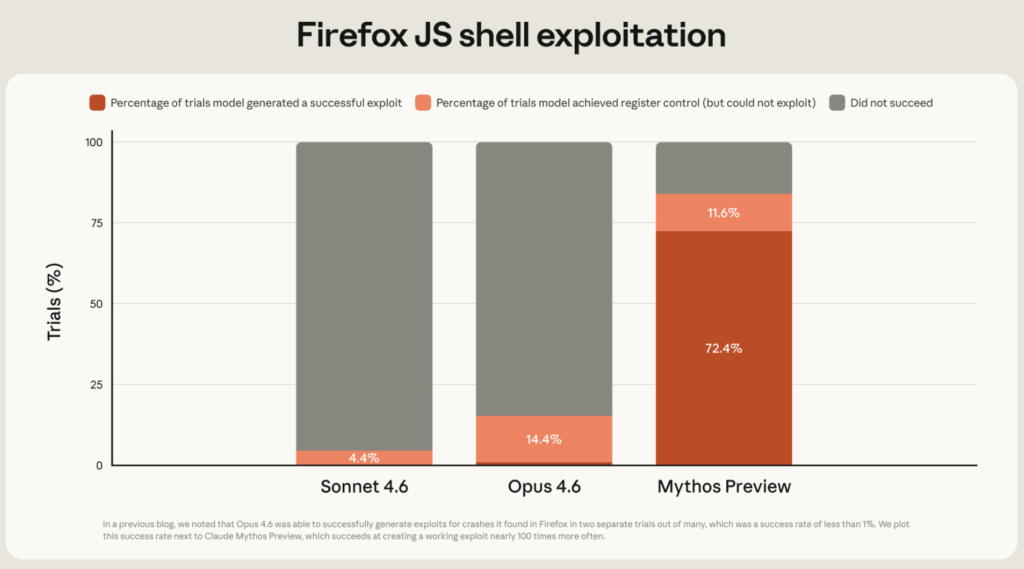

Mythos, the model developed by Anthropic, is considered too dangerous for public use: it is a frontier cybersecurity tool that can rapidly identify vulnerabilities in critical infrastructure software and develop ways to exploit them. Access to Mythos has been limited to 11 entities in the U.S. and just one in the UK. The model’s capabilities, together with the restrictions on who can use it, have prompted emergency responses from several central banks and intelligence organizations around the world. The Governor of the Bank of England stated that Mythos can “open the entire cyber risk industry,” while a Russian organization called it “worse than a nuclear weapon.”

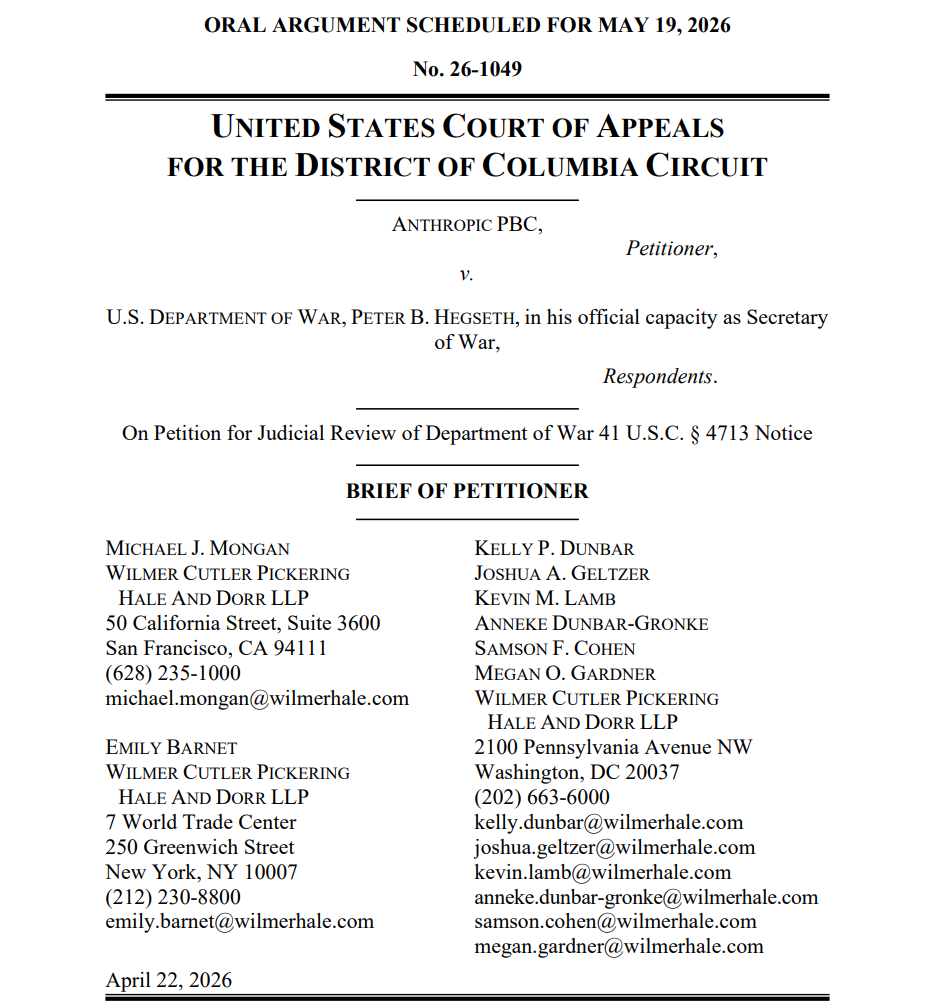

Anthropic and the U.S. Pentagon are also locked in a legal dispute in federal court. The Pentagon has classified Anthropic as a “supply chain risk,” while Anthropic says it has no “kill switch”: once the military deploys its technology, the company has no visibility into it, no technical ability to alter it, and no control over how it is used. The Pentagon argues that Anthropic is wrongfully interfering with how its technology can be used in sensitive operations, while Anthropic counters that the Pentagon can, for instance, test the technology before deploying it. Anthropic has also stated that its current usage policy prohibits use of its models for autonomous weapon systems and mass surveillance.

What Happens Next

For now, the investigation into the claims of unauthorized access is still ongoing. Meanwhile, the legal battle with the Pentagon over government contracts continues after a split decision on whether Anthropic may take on any new work for the military, though the company remains engaged with other government agencies.

Anthropic has also recently introduced Project Glasswing, which it describes as “an urgent initiative to help secure the world’s most critical software.” Using Mythos Preview, the company has already found thousands of high-severity vulnerabilities, and it aims to make the tool “invaluable for defensive work.”

At the same time, the global debate over who controls the most powerful AI models, and how to prevent their misuse, has shifted from a theoretical concern to a pressing and imminent reality.

It is widely expected that in the coming years, AI will take over much of the work humans currently perform across industries. We are already seeing this in massive layoffs and a shift of control from people to machines. The future could be overwhelmingly dystopian. Going further, do we need government regulation for everything? Do we need more control over our everyday lives? For example, even so-called decentralized finance (DeFi) is losing what little decentralization it was built upon, which is a serious concern in the crypto space. How much control can we afford to give up when, most of the time, we cannot control ourselves?